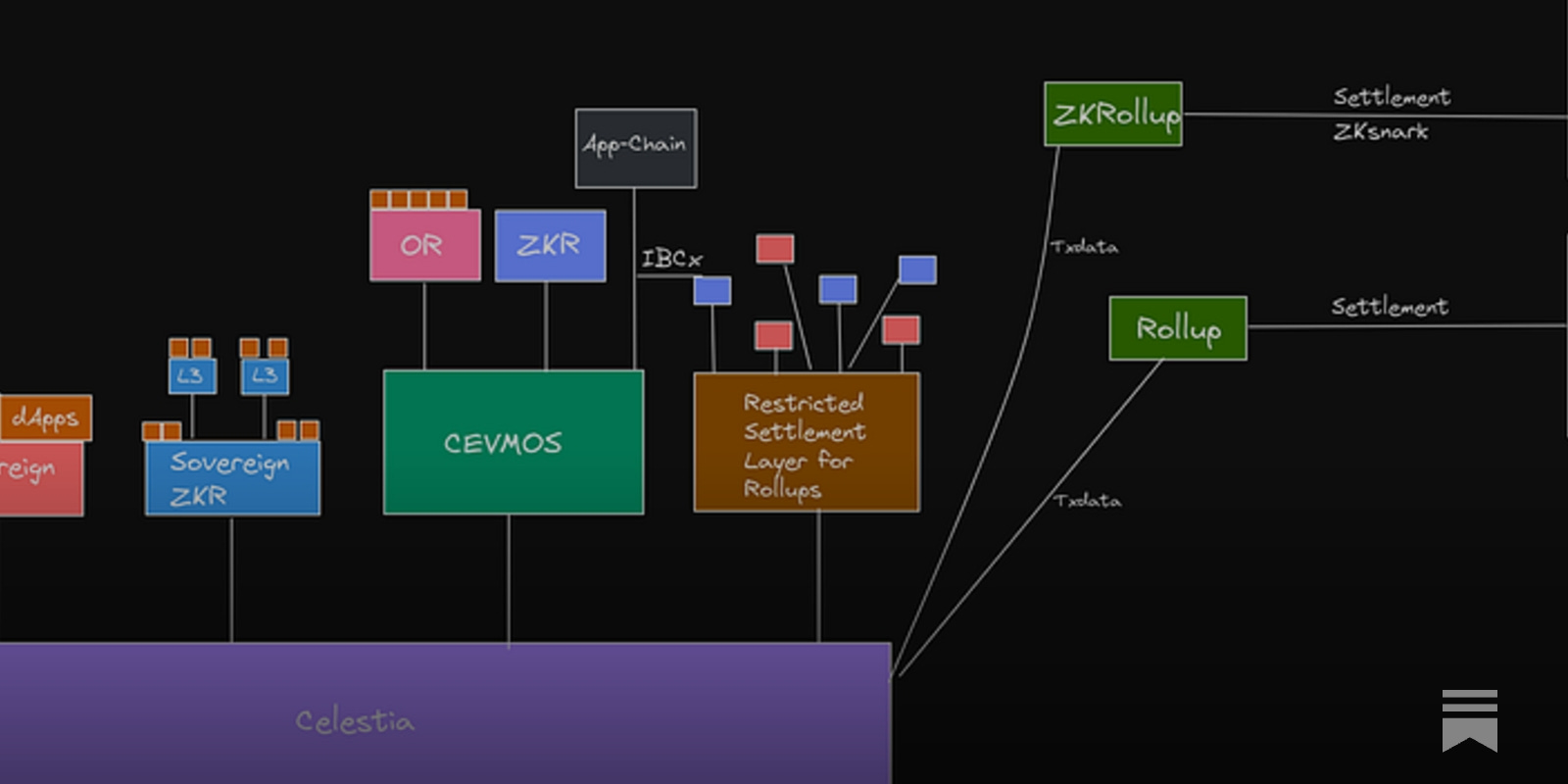

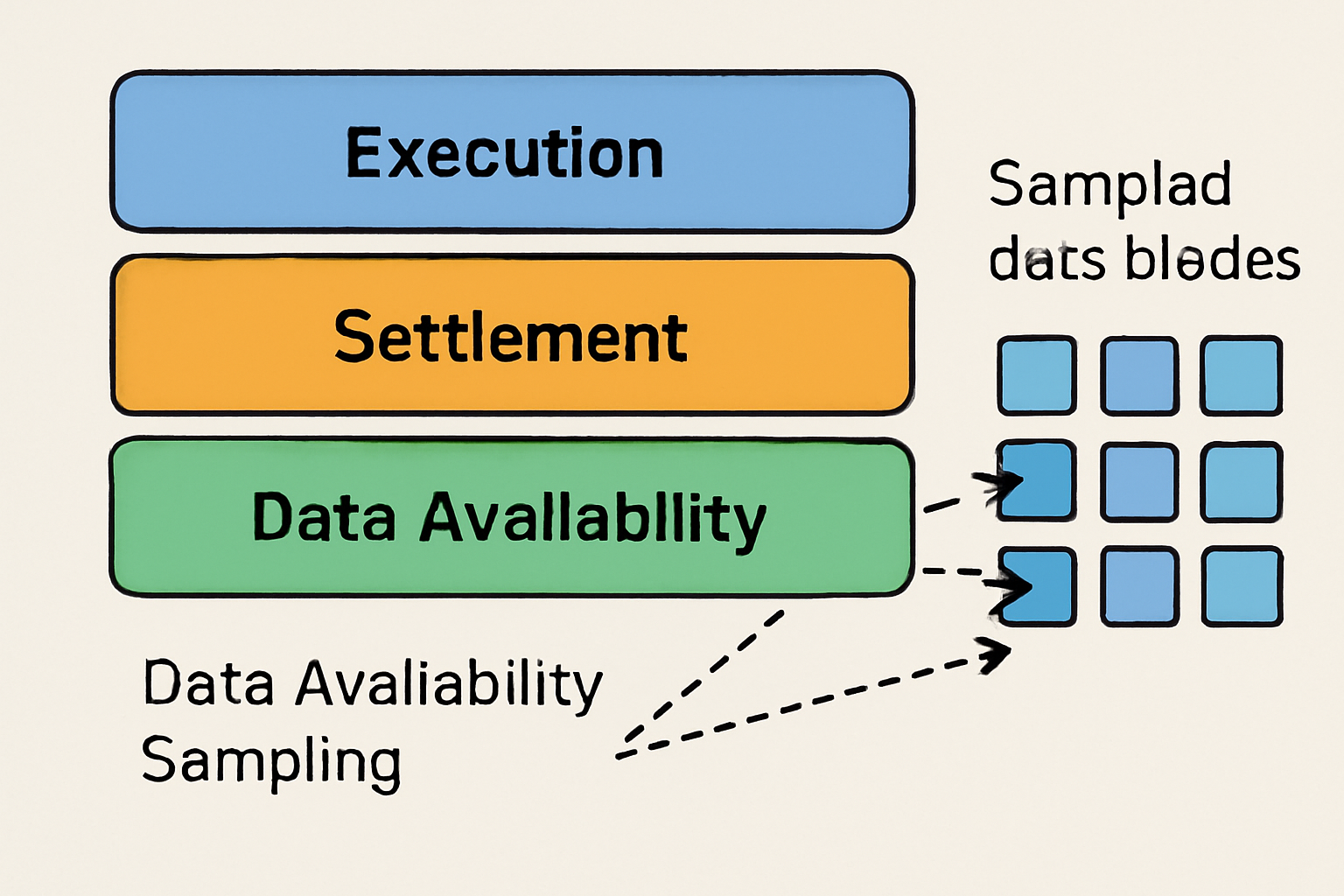

Blockchains have long faced a fundamental trade-off between scalability and security. As decentralized networks grow, the challenge of ensuring that all participants can access and verify the full set of transaction data becomes critical. In traditional monolithic designs, every node must process all transactions and hold the entire blockchain state, leading to bottlenecks in bandwidth, computation, and storage. Modular blockchains disrupt this paradigm by separating execution, settlement, and data availability into distinct layers, enabling new architectures like sharded rollups that dramatically improve throughput without compromising trustlessness.

The Role of Data Availability Sampling in Modular Scalability

At the heart of modular blockchain scalability lies data availability sampling (DAS). Rather than requiring every node to download entire blocks to verify data availability, DAS allows nodes, especially lightweight or resource-constrained ones, to sample small, random portions of block data. If a sufficient number of these samples are available and valid, nodes can be statistically confident that the entire dataset is accessible to the network. This approach significantly reduces bandwidth and storage requirements for individual participants while preserving decentralization.
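The sampling idea can be illustrated with a small simulation. This is a minimal sketch rather than any network's actual protocol: the list of booleans stands in for real network requests, and the names `passes_sampling` and `acceptance_rate`, the block size of 256 chunks, and the 16 samples per node are all assumptions chosen for illustration.

```python
import random


def passes_sampling(available, num_samples, rng):
    """Return True if every randomly chosen chunk could be fetched.

    `available[i]` is a stand-in for a network request for chunk i:
    True means the chunk was served, False means it was withheld.
    """
    indices = rng.sample(range(len(available)), num_samples)
    return all(available[i] for i in indices)


def acceptance_rate(available, num_samples, trials, rng):
    """Fraction of independent sampling rounds that accept the block."""
    passed = sum(passes_sampling(available, num_samples, rng) for _ in range(trials))
    return passed / trials


rng = random.Random(42)
full_block = [True] * 256                       # every chunk is published
half_withheld = [i >= 128 for i in range(256)]  # first half is withheld

print("fully published:", acceptance_rate(full_block, 16, 1000, rng))
print("half withheld:  ", acceptance_rate(half_withheld, 16, 1000, rng))
```

A fully published block is always accepted, while a block with half its chunks withheld should almost never pass 16 samples. Each sampling round touches only 16 of the 256 chunks, which is where the bandwidth saving comes from.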

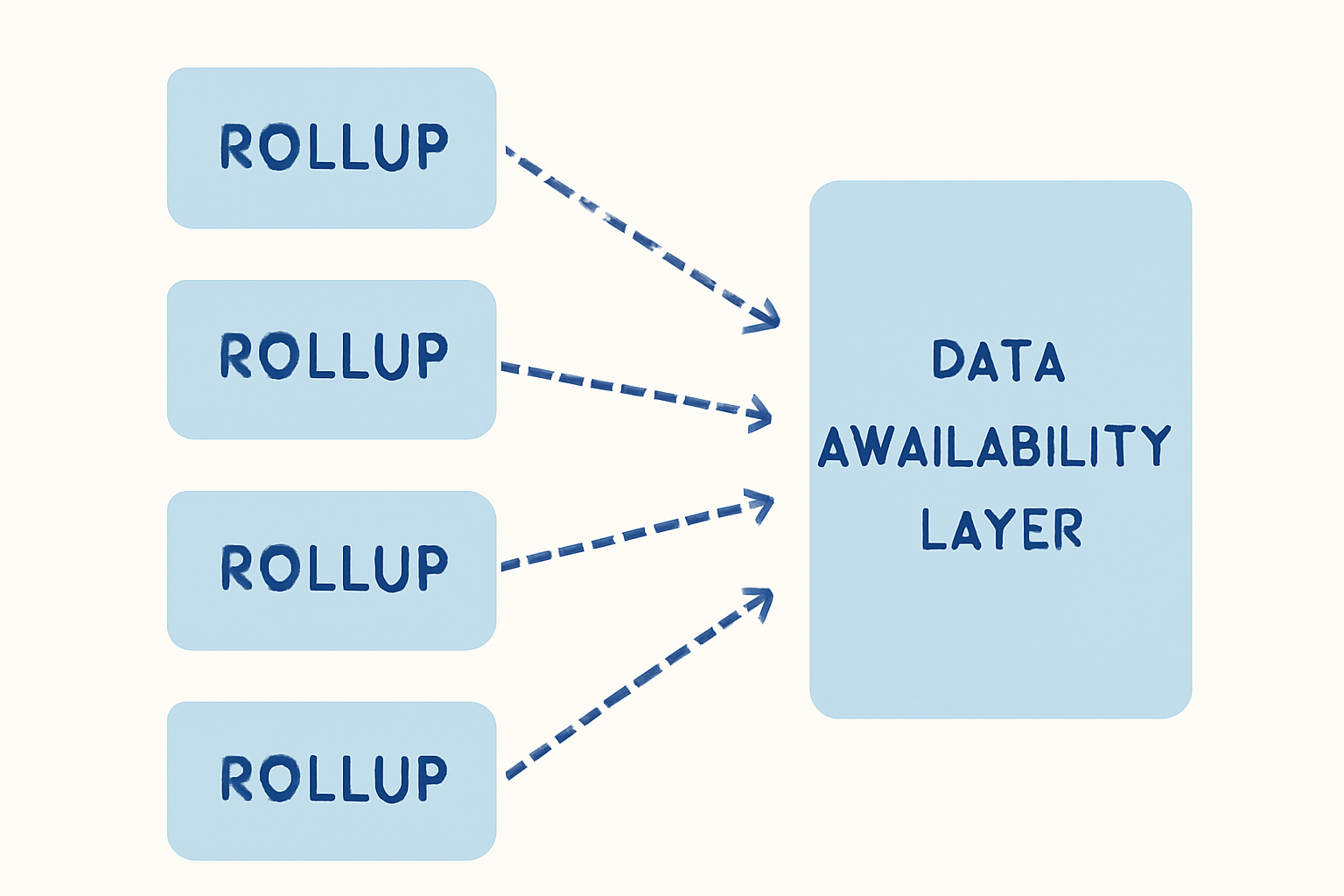

This is particularly transformative for sharded rollups, where transaction execution is distributed across multiple shards or sub-chains. Each shard posts its transaction data to a shared data availability layer, such as Celestia or Avail, which supports DAS at scale. Instead of downloading every shard’s full dataset, a prohibitive task for most validators, nodes can sample across shards and still achieve robust security guarantees.

Erasure Coding: Fortifying Data Integrity in Rollups

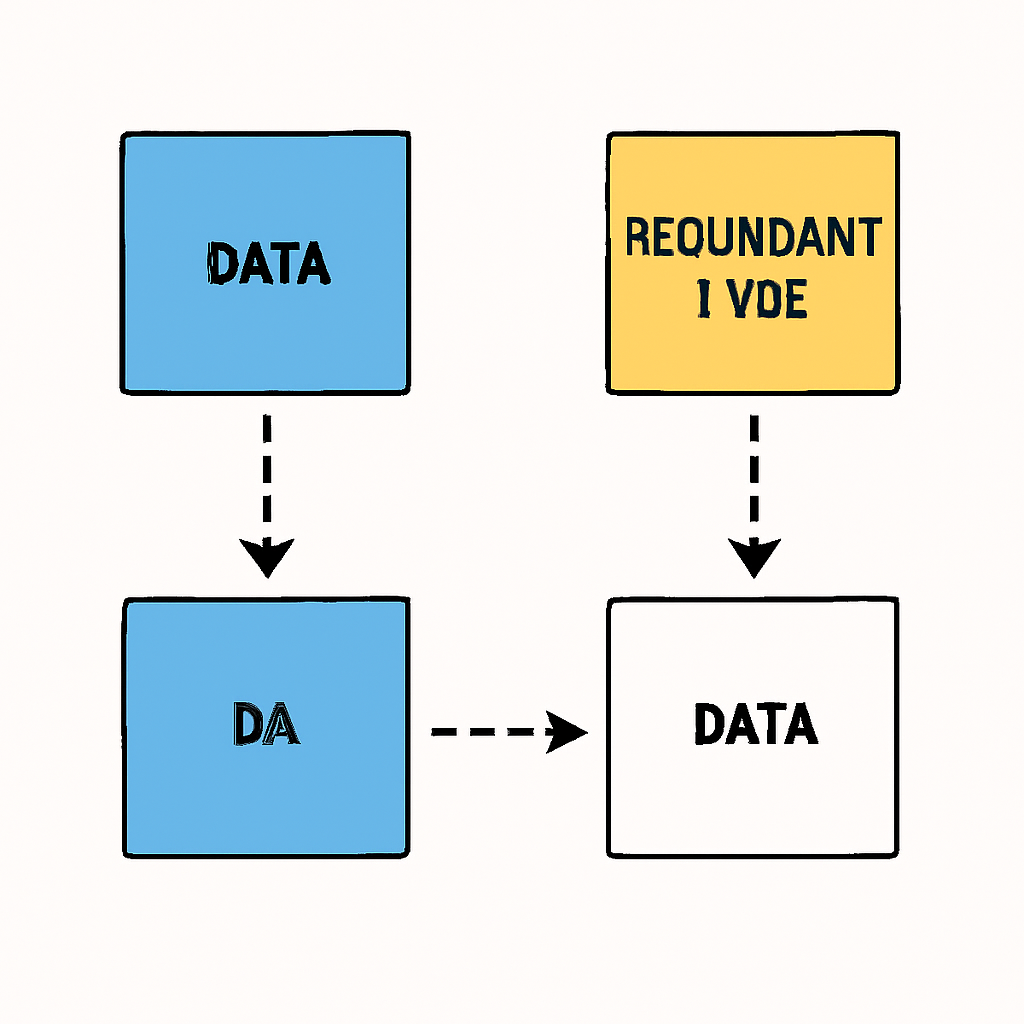

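DAS is often paired with erasure coding, a technique that extends data with redundant fragments so that the original can be rebuilt from a subset of them. Even if some fragments are missing or withheld by malicious actors, honest nodes can reconstruct the original data as long as enough pieces survive. An adversary therefore has to withhold a large share of the fragments to make any of the data unrecoverable, and withholding on that scale is exactly what random sampling is likely to catch. This makes withholding attacks against sharded rollups that rely on modular DA layers far harder to carry out undetected.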
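To make the reconstruction property concrete, here is a toy Reed-Solomon-style code built on polynomial interpolation over a prime field. It is a sketch under stated assumptions, not the encoding any particular DA layer uses: the field modulus `P`, the function names `encode` and `decode`, and the tiny parameters (4 data symbols extended to 8 fragments) are all hypothetical, and production systems use far more efficient algorithms.

```python
P = 2**31 - 1  # a prime modulus; all data symbols must be smaller than P


def _interpolate_at(points, x):
    """Evaluate at x the unique polynomial through `points` (Lagrange form, mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, n):
    """Extend k data symbols into n fragments of the form (index, value).

    The code is systematic: the first k fragments carry the data itself.
    """
    points = list(enumerate(data))
    return [(x, _interpolate_at(points, x)) for x in range(n)]


def decode(fragments, k):
    """Recover the k original symbols from any k distinct fragments."""
    points = fragments[:k]
    return [_interpolate_at(points, x) for x in range(k)]


data = [104, 101, 108, 108]
fragments = encode(data, 8)

# Suppose half of the fragments are withheld; any four survivors are enough.
survivors = [fragments[1], fragments[4], fragments[6], fragments[7]]
print(decode(survivors, 4) == data)
```

Because a polynomial of degree below 4 is fixed by any 4 of its points, the adversary in this toy setup would need to withhold at least 5 of the 8 fragments to block reconstruction.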

"Due to properties of data availability sampling, rollups plugging into Celestia as a data availability layer are able to support higher block sizes (and thus higher throughput) without sacrificing security."

- Volt Capital’s Modular Blockchains Deep Dive

The combination of DAS and erasure coding means that even light clients, such as mobile wallets or IoT devices, can securely verify data availability without needing supernode-level resources. This democratizes access while maintaining rigorous integrity standards across all shards.

Real-World Implementations: Celestia and Avail Set the Standard

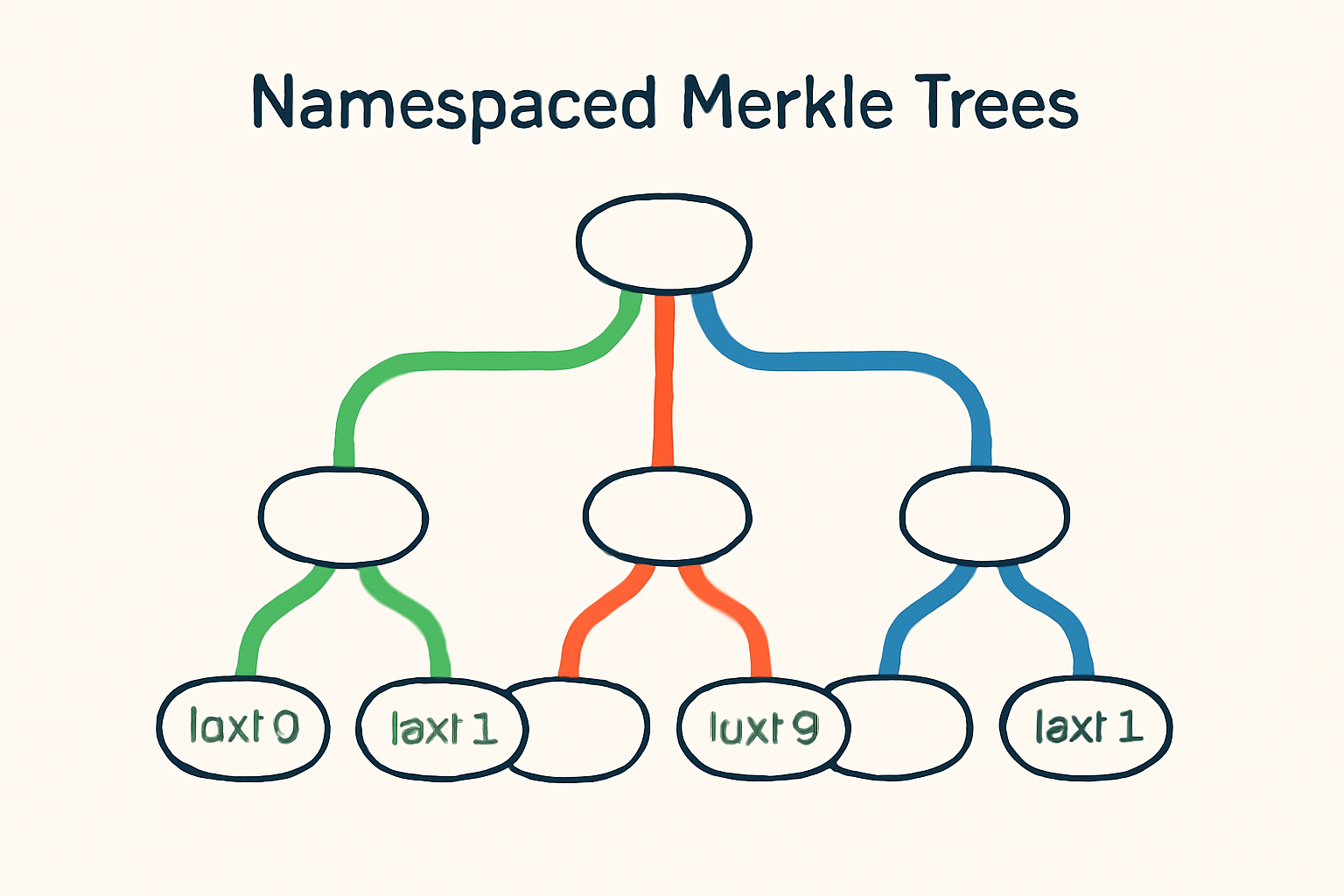

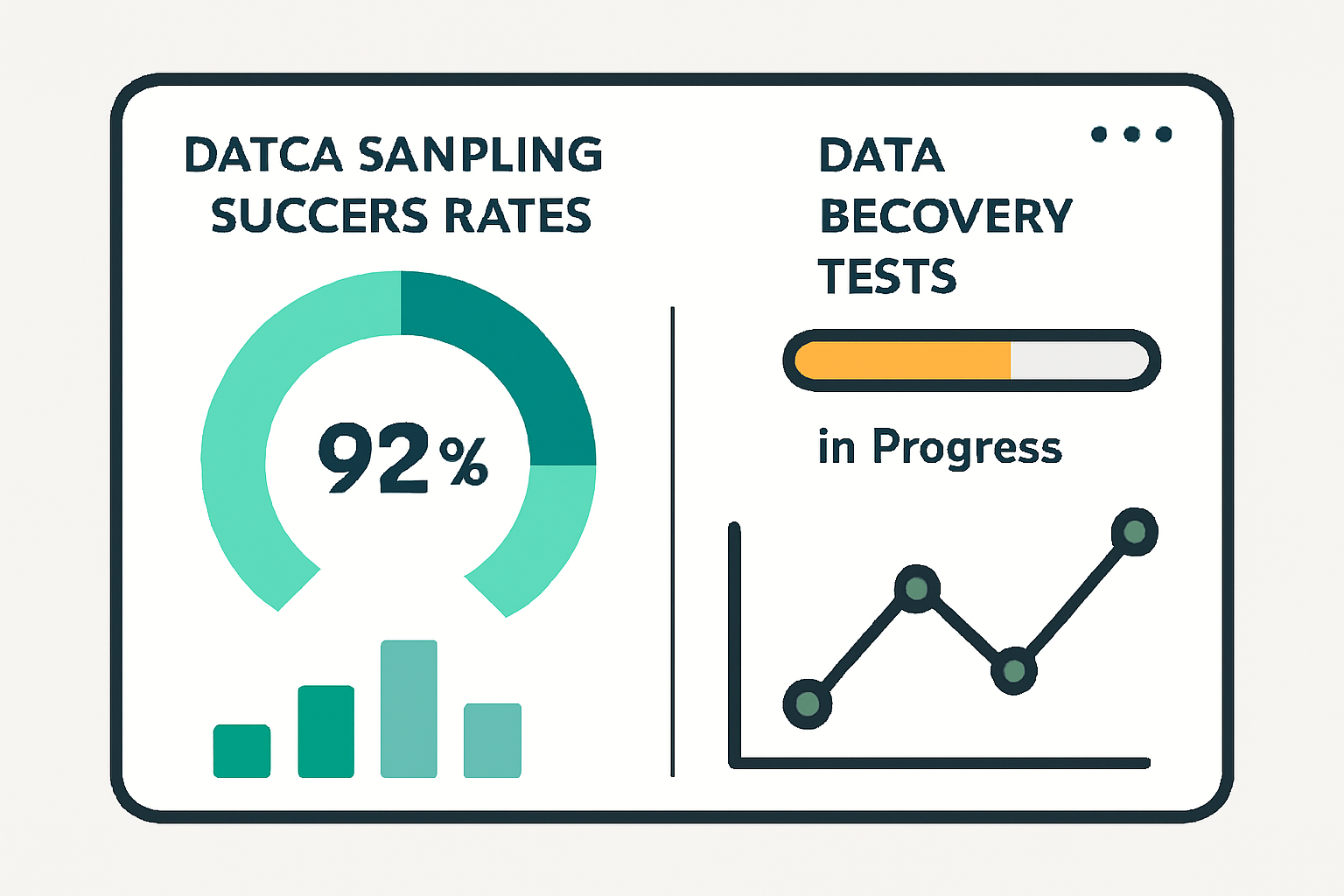

Celestia pioneered practical implementations of DAS and pairs it with Namespaced Merkle Trees (NMTs), which order block data by namespace so that a rollup's nodes can fetch only their own data and verify both its inclusion and its completeness. Meanwhile, Avail combines DAS with erasure coding and polynomial commitments, further strengthening its role as a secure DA base layer for modular ecosystems, including rollups built on the OP Stack and Arbitrum.
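The namespace idea can be sketched with a simplified tree in which every node records the range of namespaces beneath it. This is an illustrative approximation, not Celestia's actual NMT specification: the 8-byte integer namespaces, the hashing layout, and the names `Node`, `build`, and `namespace_data` are assumptions made for this example, and real proofs are verified against a root rather than by walking a full tree.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    min_ns: int                    # smallest namespace in this subtree
    max_ns: int                    # largest namespace in this subtree
    digest: bytes
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    data: Optional[bytes] = None   # set on leaves only


def _tag(node):
    """Namespace range plus digest; this is what a parent commits to."""
    return node.min_ns.to_bytes(8, "big") + node.max_ns.to_bytes(8, "big") + node.digest


def _leaf(ns, data):
    digest = hashlib.sha256(b"\x00" + ns.to_bytes(8, "big") + data).digest()
    return Node(ns, ns, digest, data=data)


def _parent(left, right):
    digest = hashlib.sha256(b"\x01" + _tag(left) + _tag(right)).digest()
    return Node(min(left.min_ns, right.min_ns), max(left.max_ns, right.max_ns),
                digest, left, right)


def build(leaves):
    """Build a tree over (namespace, data) pairs sorted by namespace.

    For brevity the number of leaves must be a power of two.
    """
    level = [_leaf(ns, data) for ns, data in leaves]
    while len(level) > 1:
        level = [_parent(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def namespace_data(node, ns):
    """Collect all leaf data for `ns`, skipping subtrees whose range excludes it."""
    if ns < node.min_ns or ns > node.max_ns:
        return []
    if node.left is None:
        return [node.data]
    return namespace_data(node.left, ns) + namespace_data(node.right, ns)


root = build([(1, b"tx-a"), (1, b"tx-b"), (2, b"tx-c"), (3, b"tx-d")])
print(root.min_ns, root.max_ns)
print(namespace_data(root, 1))
```

Since the namespace ranges are hashed into every parent, a subtree whose committed range excludes a namespace cannot be hiding data for it. That is the intuition behind completeness.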

Key Benefits of DAS for Sharded Rollup Scalability

- Enables Lightweight Node Participation: DAS allows nodes to verify data availability by sampling small portions of block data, significantly reducing bandwidth and storage requirements. This empowers even resource-constrained light nodes to securely participate in the network without needing to download entire blocks.

- Facilitates Higher Throughput and Larger Blocks: By making data verification more efficient, DAS supports the use of larger block sizes and higher transaction throughput in sharded rollups, as seen in implementations like Celestia.

- Mitigates Data Withholding Attacks: When combined with erasure coding, DAS ensures that even if some data fragments are missing, the original data can still be reconstructed. This makes it much harder for malicious actors to withhold critical data from the network.

- Enhances Decentralization and Security: By lowering the resource barrier for node participation, DAS increases the number of validators and observers in the network, thereby improving decentralization and strengthening security guarantees for sharded rollups.

- Supports Modular Blockchain Architectures: DAS is integral to the design of modular blockchains, enabling specialized layers (such as execution, settlement, and data availability) to scale independently. Projects like Avail leverage DAS to provide a robust data availability layer for rollups.

This modular approach not only boosts throughput but also preserves decentralization by lowering participation barriers for validators across shards. As more networks adopt these techniques, we’re witnessing a pivotal shift toward scalable, trustless blockchain infrastructure capable of supporting mainstream applications at global scale.

Looking closer at the mechanics, data availability sampling fundamentally redefines the economic and technical landscape for sharded rollups. Since nodes can probabilistically verify data availability with minimal overhead, block producers are incentivized to publish complete data or risk having their blocks rejected by honest samplers. This cryptoeconomic alignment sharply reduces the attack surface associated with data withholding, a critical concern for any scalable blockchain network.

Security Implications and Attack Resistance

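The security guarantees offered by DAS are not merely theoretical. The probability that an adversary hides unavailable data while still passing random sampling checks decreases exponentially with each additional sample. For example, suppose a block is erasure-coded into 1,024 fragments, any half of which suffice to rebuild it (a rate-1/2 code, assumed here for illustration). To make the block unrecoverable, an adversary must withhold more than half of the fragments, so each random sample hits a missing fragment with probability greater than 1/2. A node that samples just 10 fragments misses the attack with probability below 2^-10, about 0.1%, and at 30 samples the figure falls below one in a billion. Because many nodes sample independently, large-scale withholding attacks become impractical.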
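The exponential drop-off can be checked with a short calculation. The sketch below assumes sampling without replacement and a rate-1/2 code, so that an adversary must withhold at least 513 of the 1,024 fragments to prevent reconstruction. The code rate and the function names `undetected_probability` and `samples_needed` are assumptions introduced for illustration.

```python
from math import comb


def undetected_probability(total, withheld, samples):
    """Chance that `samples` distinct random fragments are all available.

    This is the hypergeometric probability of drawing zero withheld
    fragments when sampling without replacement.
    """
    available = total - withheld
    return comb(available, samples) / comb(total, samples)


def samples_needed(total, withheld, target):
    """Smallest sample count whose miss probability is at or below `target`."""
    samples = 0
    while undetected_probability(total, withheld, samples) > target:
        samples += 1
    return samples


for s in (10, 20, 30):
    print(s, undetected_probability(1024, 513, s))

print(samples_needed(1024, 513, 1e-9))
```

Each extra sample roughly halves the chance of missing the attack in this setting, so a handful of additional requests buys several orders of magnitude of confidence.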

Moreover, because DAS lets light clients verify data availability for themselves, the set of nodes checking the chain can grow larger and more decentralized. This is especially relevant for sharded rollups: each shard can be validated by a different subset of nodes without requiring massive resource commitments from each participant. The result is a mesh of cross-verified shards secured by a lean yet robust DA layer.

Future-Proofing Modular Blockchain Scalability

As modular architectures mature, DAS will play an increasingly central role in future-proofing blockchain scalability. Emerging standards like Celestia’s Namespaced Merkle Trees and Avail’s polynomial commitment schemes allow networks to scale horizontally, adding more shards or rollups as demand grows, without linear increases in validation costs.

This horizontal scaling model stands in stark contrast to legacy monolithic chains where every node must process every transaction. Instead, modular blockchains equipped with efficient DA layers and DAS can support thousands of independent rollups or application-specific shards, all benefiting from shared security without centralized bottlenecks.

Key Takeaways for Developers and Researchers

For builders considering sharded rollup architectures or evaluating DA solutions, several best practices emerge:

- Prioritize interoperability between execution environments and DA layers to maximize flexibility.

- Leverage erasure coding alongside DAS for stronger liveness guarantees.

- Monitor ongoing research in cryptographic sampling techniques and cross-shard communication protocols.

The modular thesis is now being realized not just as a theoretical ideal but as a production-ready reality powering next-generation ecosystems. With active deployments from Celestia and Avail leading the charge, and new entrants rapidly iterating, data availability sampling has become foundational for trustless scalability in web3 infrastructure.
